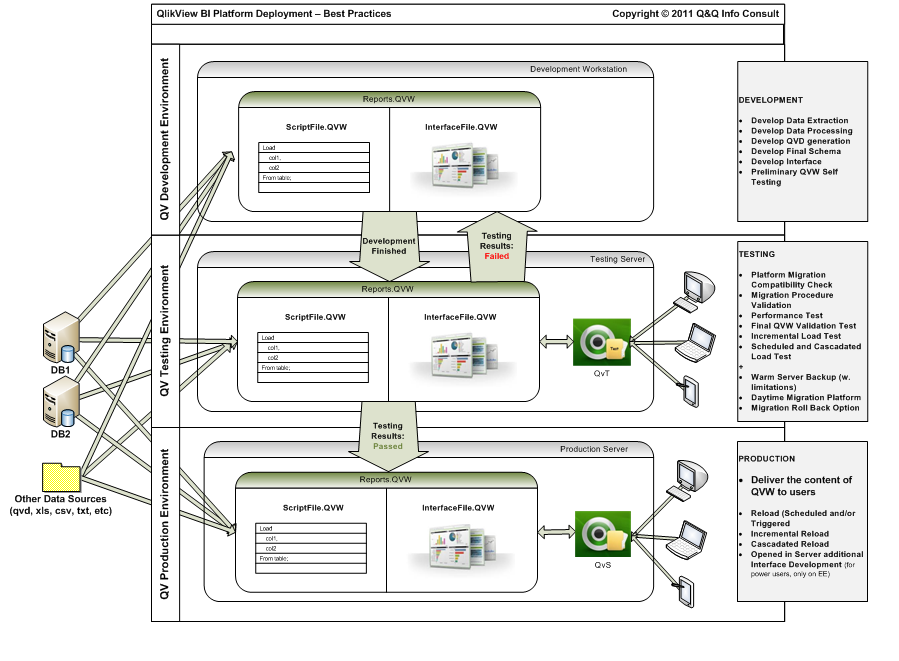

While it is clear that we need the Production stage (or else we’ve missed the point, right?), some aspects should be covered for the first two stages:

This allows not only version comparison, but also check-in / check-out facilities (preventing two developers from working in the same area, with the inherent risk of generating conflicting versions).

2. For the Testing stage, at least 3 perspectives need to be covered:

- testing the new local developments (whole new QVW applications or new parts of existing ones) before launching them into Production (real-life usage). This includes extensive testing of the correct cascading of data staging processes and of the incremental loads;

- testing the new versions and builds of the QlikView™ platform released (several times a year) by QlikTech, before migrating our Production platform to the newest version/build;

- testing the performance capabilities of our platform and, especially, of our new reports.

***

Assuming the reasons mentioned above are enough to consider the 3-stage approach, budgetary constraints are still, especially nowadays, a major issue. It is not easy for any kind of organization to double its licensing budget just to provide this kind of redundancy.

But even under all these constraints, there is good news: the QlikView™ licensing model provides a special testing license, named QlikView™ Test Server, that costs approximately half the price of the regular equivalent QlikView™ Server and provides the same number of CAL licenses as the associated QlikView™ Server (without extra charge for the CALs!).

For the QvS Enterprise Edition this has been available for some time already, but there is now also a brand new one, called QvT SBE (Small Business Edition), available since this summer at a fairly reasonable price point!
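To make the budget argument concrete, here is a minimal sketch of the arithmetic. The prices and CAL count are made-up placeholders, not real QlikView™ list prices, and the function name `three_stage_cost` is hypothetical. It only assumes what is stated above: the Test Server costs roughly half of the regular server and comes with the same number of CALs at no extra charge.

```python
def three_stage_cost(server_price, cal_price, cal_count, test_discount=0.5):
    """Compare two ways of licensing a test environment next to Production.

    All inputs are placeholder values for illustration only.
    Returns (cost_with_full_duplicate, cost_with_test_server).
    """
    # Production: one regular server plus its CALs.
    production = server_price + cal_price * cal_count

    # Option A: duplicate the whole Production licensing for testing.
    full_duplicate = 2 * production

    # Option B: Production plus a Test Server at about half the server
    # price, with the matching CALs included at no extra charge.
    with_test_server = production + server_price * test_discount

    return full_duplicate, with_test_server


# Placeholder numbers: server = 100 units, CAL = 10 units, 20 CALs.
full, reduced = three_stage_cost(server_price=100.0, cal_price=10.0, cal_count=20)
print(full, reduced)
```

With these placeholder values, the test environment adds only half a server price on top of the Production budget instead of doubling it; the more CALs are involved, the larger the relative saving.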

***